Everything seems to be ‘AI-enhanced’ these days. So here are the six questions I ask data protection vendors when we talk about where AI fits within their solution stacks, today or on their roadmaps.

video transcript

Have you noticed that everything now claims to be AI-enhanced? Maybe it is. But if all you have is a chatbot that helps you understand what’s going on operationally in your environment, you’re really missing out.

Backup can be “the great aggregator.”

One of AI’s real promises is its ability to ingest information across silos and correlate it into new insights. That’s a huge accelerant, but it also carries risk. Instead of having to breach each of your production silos individually, threat actors may now find everything in one basket, or in very few.

So, when I talk to data protection vendors about where AI fits into their platform, here’s what I’m actually looking for:

How is AI improving data protection for your customers? Say you’re backing up 100 workloads. Can AI figure out that three of those datasets contain PII or healthcare information, and adjust retention policy accordingly? Can it identify that some datasets include information about German citizens and therefore should only be stored within sovereign borders?

Is AI helping with cyber resilience? Can it detect rising entropy as an indicator of an encryption attack, or identity changes that might reveal elevated access by threat actors? When it spots those bad behaviors, can you granularly restore around them? And how is AI helping identify clean restore points in the first place?
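To make the entropy question concrete, here is a minimal sketch of the underlying signal, not any vendor’s method. It computes Shannon entropy in bits per byte: readable data scores well below 8, while encrypted data looks random and scores close to 8. The threshold (7.5), the jump rule `entropy_jump`, and its `min_delta` of 2.0 are hypothetical values chosen for illustration. Compressed files and media also score high, so treat this as one signal to correlate with others rather than a verdict.

```python
import math
import os
from collections import Counter

# Hypothetical cut-off: values near 8.0 bits/byte suggest random-looking
# (encrypted or compressed) content. Real products tune this per data type.
ENTROPY_THRESHOLD = 7.5


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy of a byte string, in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)  # iterating over bytes yields ints 0-255
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_encrypted(data: bytes, threshold: float = ENTROPY_THRESHOLD) -> bool:
    """Flag a block whose entropy meets or exceeds the threshold."""
    return shannon_entropy(data) >= threshold


def entropy_jump(previous: bytes, current: bytes, min_delta: float = 2.0) -> bool:
    """Hypothetical rule: flag a block whose entropy rose sharply between two backups."""
    return shannon_entropy(current) - shannon_entropy(previous) >= min_delta


if __name__ == "__main__":
    plain = b"Quarterly report: revenue up, costs down. " * 100
    scrambled = os.urandom(len(plain))  # stand-in for ciphertext
    print(f"plain:     {shannon_entropy(plain):.2f} bits/byte, flagged={looks_encrypted(plain)}")
    print(f"scrambled: {shannon_entropy(scrambled):.2f} bits/byte, flagged={looks_encrypted(scrambled)}")
    print("jump between backups:", entropy_jump(plain, scrambled))
```

Comparing the same block across successive backups is the more useful design choice: a file that was always compressed stays high, while a document that suddenly jumps from roughly 4 to nearly 8 bits per byte is the pattern worth investigating.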

Most of us already struggle to choose which AI tools to use; there are so many options. So, is your platform’s AI yet another interface to learn, or can other AI-enhanced frameworks interact with and manage your stack?

Building on that: where are your control plane and your preferred repositories relative to where the AI model actually lives? Is everything self-contained within your stack, or are you exposing backup repositories to third-party clouds? I’m a fan of authentic integrations, and I love it when you build the capability within your own codebase. But I have little to no confidence when you buy something incongruent and magically promise some kind of “better together.”

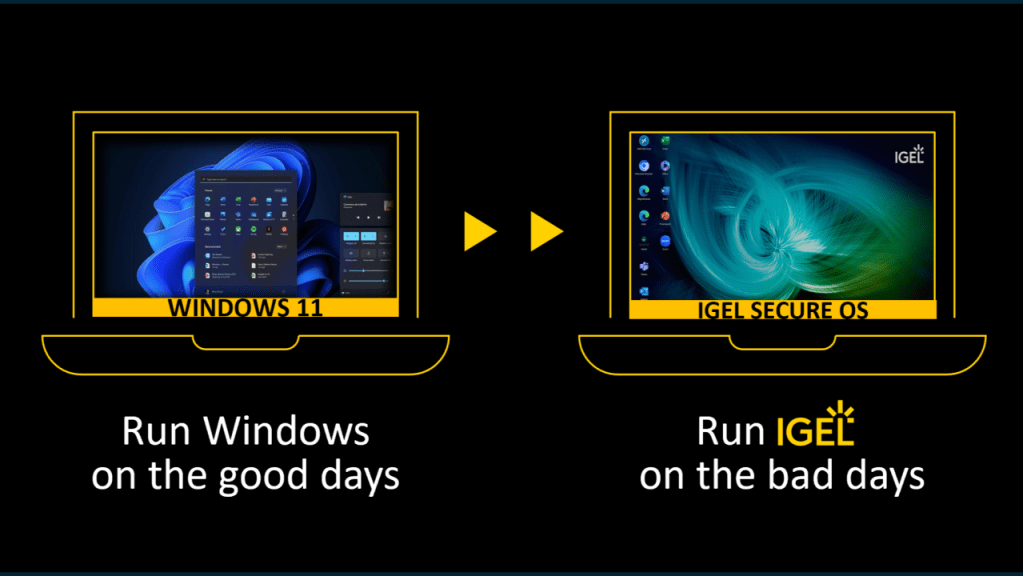

Conversely: anything your business processes rely on in production needs to be protected and assured for resilience. So, what about your production AI infrastructure itself? If you’re running AI workloads on premises, can you protect them? Can you restore them to known good configurations when something goes sideways?

Finally: we know AI is compounding the threats and vulnerabilities that were already overwhelming most organizations. So, specifically, what can your data protection solution do to help combat that?

I don’t have all the answers. But I do have more questions, and frankly, so do some of DPM’s clients. That’s why DPM will be surveying 600+ IT decision makers on what they are looking for as AI enhances, or will enhance, their data protection and resilience stacks. Fieldwork starts in June, but you can preview the topics and questions right now at http://DataProtectionMatters.com/STAR27.

If you were surveying those IT leaders, what would you want to know? Drop your question in the comments.
